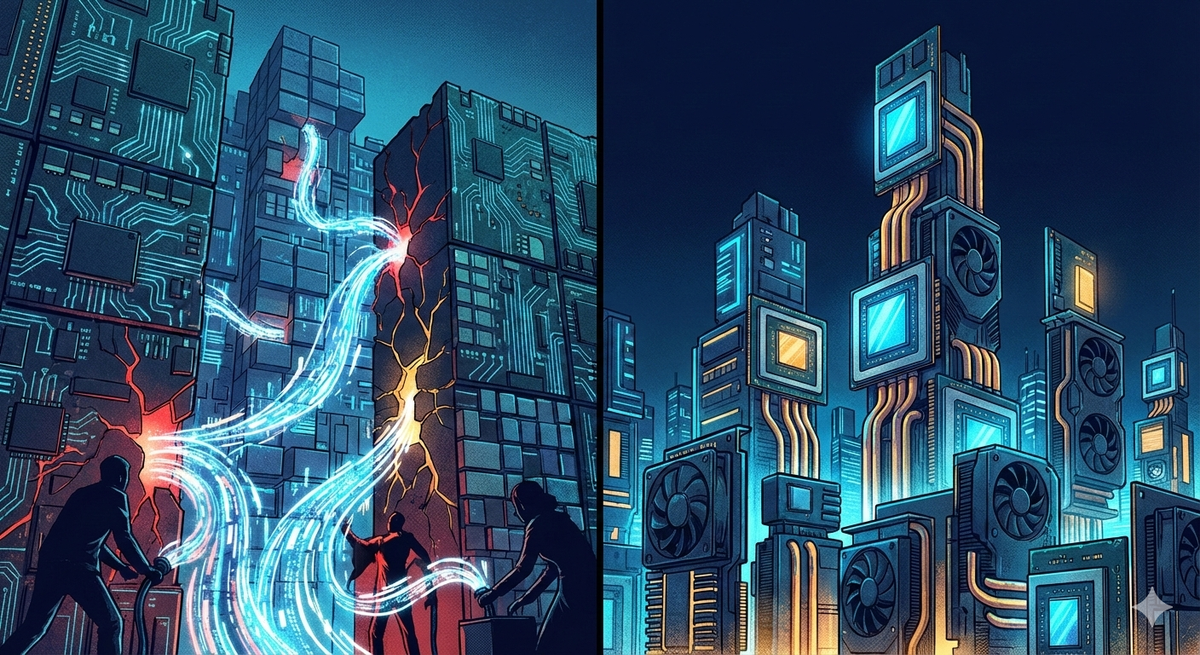

AI Model Theft and the Chip Race: Two Cybersecurity and Technology Trends Reshaping Enterprise Strategy

This week, two major stories shaped the tech industry. First, Anthropic publicly accused three Chinese AI labs of running large-scale operations to steal the capabilities of its Claude model. On the same day, AMD and Meta announced a strategic partnership valued at up to $100 billion, which indicates a significant shift in the AI chip market. While these stories may seem unrelated at first, a closer look reveals a common theme: the AI era is redefining "security" for every organization, from protecting intellectual property to securing the supply chains that support it.

If you are responsible for technology strategy, both developments require your attention. Here's what happened, why it matters, and what actions you should consider taking.

Industrial-Scale AI Model Distillation: A New Breed of IP Theft

What Happened

Anthropic revealed that three Chinese AI companies, DeepSeek, Moonshot AI, and MiniMax, conducted coordinated campaigns to extract abilities from its Claude model using a method called "distillation." Across approximately 24,000 fraudulent accounts, these companies generated over 16 million interactions with Claude, using commercial proxy services to bypass geofencing and regional access restrictions.

The campaigns were both targeted and systematic. DeepSeek concentrated on foundational logic and alignment, while Moonshot AI focused on agentic reasoning, tool use, and computer vision, accounting for more than 3.4 million exchanges. MiniMax was detected mid-campaign, before it had released the model it was training, and pivoted within 24 hours to target capabilities from Anthropic's latest release.

This disclosure follows similar allegations from OpenAI and a report from Google's Threat Intelligence Group, which highlighted distillation attacks targeting Gemini's reasoning capabilities.

Why It Matters for Enterprise Leaders

Model distillation is not a fringe research topic; it represents a significant threat to intellectual property on a large scale. Here's why Chief Information Security Officers (CISOs) and IT directors should take this issue seriously.

First, models created through illicit distillation are unlikely to maintain safety guardrails. This can lead to the proliferation of dangerous capabilities in military, intelligence, and surveillance applications that lack the necessary alignment safeguards. Such developments have serious repercussions for organizations operating in regulated industries or working with government contracts.

Second, the attack surface is expanding. If advanced AI models can be systematically extracted through API abuse, similar techniques could be used to target enterprise deployments. Organizations that run proprietary fine-tuned models, expose internal AI services through APIs, or create AI-powered products face comparable risks.

Third, the geopolitical landscape is shifting rapidly. The U.S. Commerce Department is currently considering stricter export controls for AI chips, and disclosures like this one contribute to that policy discussion. Compliance requirements may change quickly as a result.

What You Should Do

Organizations should assess their AI exposure using a practical framework.

Audit API access controls. If your organization provides AI models via APIs—internally or externally—consider reviewing rate limiting, anomaly detection, and account verification. Fraudulent account creation at scale has been a key entry point for these campaigns.
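As one illustration of the rate-limiting control mentioned above, here is a minimal Python sketch of a per-account sliding-window limiter. The class name, the thresholds, and the in-memory storage are assumptions made for illustration. They do not describe how Anthropic or any other vendor implements its limits, and a production service would typically keep this state in a shared store rather than in process memory.

```python
import time
from collections import defaultdict, deque
from typing import Optional


class SlidingWindowRateLimiter:
    """Allow at most `max_requests` per account within `window_seconds`.

    Hypothetical helper for illustration only; state is kept in memory.
    """

    def __init__(self, max_requests: int, window_seconds: float):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        # account_id -> timestamps of requests that were allowed
        self._hits = defaultdict(deque)

    def allow(self, account_id: str, now: Optional[float] = None) -> bool:
        """Return True if the request may proceed, False if it is throttled."""
        now = time.monotonic() if now is None else now
        hits = self._hits[account_id]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True


if __name__ == "__main__":
    limiter = SlidingWindowRateLimiter(max_requests=3, window_seconds=60)
    for second in (0, 1, 2, 3):
        print(second, limiter.allow("acct-123", now=second))
```

A per-account limit like this does little on its own against a campaign spread across thousands of accounts, which is why it belongs alongside account verification and cross-account anomaly detection rather than in place of them.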

Implement output monitoring. Monitor for patterns indicating systematic capability extraction, such as high-volume structured queries, sequential probing of model capabilities, and geographic access anomalies. Industry best practices increasingly emphasize behavioral analytics for API traffic rather than relying solely on authentication gates.

Review vendor risk. If you use third-party AI services, understand each provider's defenses. Ask specific questions: How do they detect abuse? What telemetry do they collect? How quickly can they respond to active campaigns?

Track regulatory developments. The intersection of AI intellectual property protection and export controls is evolving rapidly. Organizations with international operations or supply chains that involve restricted entities should stay informed about developments from the Bureau of Industry and Security (BIS) and relevant congressional committees.

Common Mistakes to Avoid

A common mistake is treating AI security solely as a concern during model training. Distillation attacks occur at the inference layer, through APIs that are already in production. Another misconception is that terms of service offer sufficient protection. Anthropic's terms explicitly prohibit such activities, yet these campaigns operated on a large scale before they were discovered. Legal frameworks need technical controls to back them up.

AMD's $100 Billion Meta Deal: What the AI Chip Race Means for Your Infrastructure

What Happened

AMD and Meta have announced an expanded strategic partnership to deploy 6 gigawatts of AMD GPU capacity across Meta's data centers, potentially worth up to $100 billion. This agreement includes custom AMD Instinct GPUs based on the MI450 architecture and 6th Gen AMD EPYC CPUs, codenamed "Venice," with initial shipments expected in the second half of 2026. As part of the deal, Meta has received performance-based warrants for up to 160 million AMD shares, which represent roughly 10% of the company. These warrants are structured to vest as AMD's stock price reaches specific thresholds.

Following the announcement, AMD's stock jumped by more than 10%. This comes on the heels of a similar deal AMD made with OpenAI in October 2025, and just weeks after Meta expanded its partnership with Nvidia to deploy millions of additional GPUs.

Why It Matters for Enterprise Leaders

This is not just a story about two large companies. It signals structural changes in the AI compute market that directly affect enterprise procurement and strategy.

Supply chain diversification is real. For years, Nvidia has held roughly 90% of the AI accelerator market. Meta's decision to make a massive parallel bet on AMD signals that hyperscalers are actively reducing their dependence on a single vendor. Enterprise organizations should take note.

Pricing pressure is coming. Genuine competition between AMD and Nvidia for hyperscale workloads creates downward pricing pressure that will eventually reach enterprise tiers. Organizations planning AI infrastructure investments in the next 12–24 months should factor this into procurement timelines.

The power conversation is intensifying. Six gigawatts is an enormous amount of data center capacity. As AI compute demands grow, power availability is becoming a first-order constraint on AI deployment. Enterprise leaders evaluating on-premises or colocation AI infrastructure need to factor power planning into their strategy, not just chip selection.

What You Should Do

Diversify your accelerator strategy. If you are deploying AI workloads, evaluate AMD's Instinct alongside Nvidia. The MI450 architecture is purpose-built for large-scale AI training and inference, and AMD's ROCm ecosystem has matured considerably. A dual-vendor strategy reduces supply risk and strengthens your negotiating position.

Revisit your infrastructure roadmap. The pace of chip evolution and the scale at which hyperscalers are deploying mean that capacity planning models from even 18 months ago may be outdated. Benchmark your projected AI compute needs against current-generation hardware, not legacy assumptions.

Engage your procurement team early. Lead times for enterprise AI hardware remain extended. If your organization anticipates significant AI workloads in 2027 or beyond, the procurement conversation should be happening now.

The Convergence: Security and Infrastructure Are the Same Conversation

These two stories share a common theme. The distillation campaigns show that AI capabilities have become high-value targets for adversaries willing to invest significant resources to steal them. The AMD and Meta deal shows how much capital is flowing into AI infrastructure, all of which requires proper security, governance, and strategic management.

For CISOs and IT directors, the message is clear: AI security should not be isolated from AI infrastructure planning. The models you develop or deploy, the APIs you expose, the chips you acquire, and the data centers you manage all contribute to a single risk landscape. Organizations that view AI as a cohesive strategic discipline, rather than a series of disconnected initiatives, are more likely to succeed.

Sources:

- Anthropic: Detecting and Preventing Distillation Attacks

- CNBC: Anthropic joins OpenAI in flagging 'industrial-scale' distillation campaigns by Chinese AI firms

- TechCrunch: Meta strikes up to $100B AMD chip deal

- Fortune: AMD's $100 billion Meta deal marks a turning point for CEO Lisa Su

- CNBC: Meta strikes AI chip deal with AMD

- The Hacker News: Anthropic Says Chinese AI Firms Used 16 Million Claude Queries to Copy Model

- TechCrunch: Anthropic accuses Chinese AI labs of mining Claude