From Photons to Processors: NVIDIA's $4B Photonics Bet and Apple's M5 Signal the Next Era of AI Infrastructure

The central challenge facing AI adoption is now less about intelligence and more about the foundational infrastructure that enables it. While most people focus on model parameters and benchmark scores, two recent announcements have quietly changed the landscape of enterprise technology. NVIDIA invested $4 billion in silicon photonics companies Coherent and Lumentum. At the same time, Apple launched its M5-powered MacBook lineup, built specifically for on-device AI workloads, alongside updated Studio Displays.

For CISOs, IT directors, and technology executives, these developments are more than product updates. They are leading indicators of where infrastructure is heading. Executives who recognize and plan for this shift will position their organizations ahead of the curve as AI-native operations become the standard.

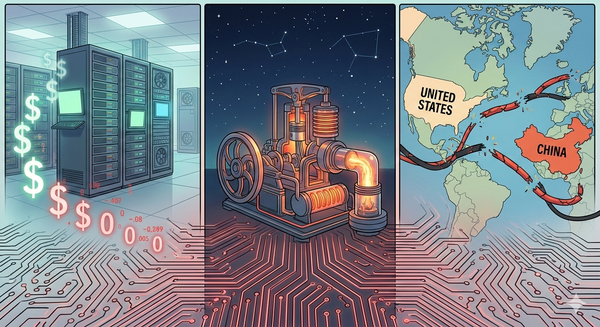

NVIDIA's Photonics Play: Why Moving Data Now Matters More Than Processing It

NVIDIA's $4 billion investment, split between Coherent and Lumentum, is as much a strategic supply chain move as it is an R&D bet. Alongside the equity stakes, NVIDIA signed multibillion-dollar agreements for advanced laser components and optical networking, and both companies will expand U.S. manufacturing.

The Technical Problem

As AI clusters grow to tens of thousands of GPUs, copper interconnects hit limits in bandwidth, latency, and heat dissipation. Silicon photonics, which uses light rather than electrical signals to move data, offers lower power consumption, less heat, and much higher bandwidth. NVIDIA itself stated that "optical interconnect technology and package integration are critical for the continued scaling of AI factories."

Why IT Leaders Should Care

This issue isn't limited to cloud providers. Its effects will reach every layer of enterprise technology:

- Data center planning: Organizations building or expanding private AI infrastructure need to factor photonic interconnect compatibility into their roadmaps now, not in 2028 when retrofit costs will be significantly higher.

- Power and cooling: Optical interconnects use less energy per bit than electrical ones. For enterprises facing data center power limits, an increasingly common pain point, this shift changes what's possible.

- Partner readiness: With NVIDIA's supply agreements in place, companies should verify whether their data center and colocation partners plan to support photonics-ready infrastructure.
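To make the energy-per-bit point concrete, here is a small sketch that converts an interconnect's energy cost into continuous power draw. It rests on one identity: 1 picojoule per bit at 1 terabit per second equals 1 watt, so multiplying pJ/bit by aggregate Tb/s yields watts directly. The 15 pJ/bit and 5 pJ/bit figures, the 1,000-link count, and the 1.6 Tb/s per-link rate are hypothetical placeholders chosen for easy arithmetic, not measured or vendor-published values; substitute numbers from your own hardware quotes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InterconnectProfile:
    """An interconnect technology described by its energy cost per bit moved."""
    name: str
    picojoules_per_bit: float  # hypothetical placeholder, not a vendor spec


def interconnect_power_watts(profile: InterconnectProfile, links: int, tbps_per_link: float) -> float:
    """Power = energy per bit x bits per second.

    1 pJ/bit at 1 Tb/s is 1e-12 J/bit * 1e12 bit/s = 1 W, so the
    product of pJ/bit and aggregate Tb/s is already in watts.
    """
    if links < 0 or tbps_per_link < 0:
        raise ValueError("links and bandwidth must be non-negative")
    aggregate_tbps = links * tbps_per_link
    return profile.picojoules_per_bit * aggregate_tbps


# Illustrative assumptions only: replace with figures from your own vendors.
electrical = InterconnectProfile("electrical (assumed)", picojoules_per_bit=15.0)
optical = InterconnectProfile("optical (assumed)", picojoules_per_bit=5.0)

for profile in (electrical, optical):
    watts = interconnect_power_watts(profile, links=1_000, tbps_per_link=1.6)
    print(f"{profile.name}: {watts / 1_000:.1f} kW")
```

With those assumed inputs the loop prints 24.0 kW for the electrical profile and 8.0 kW for the optical one, before any cooling overhead. The absolute numbers matter less than the shape of the relationship: whatever per-bit saving your vendors actually quote scales linearly with aggregate bandwidth.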

The Broader Context

NVIDIA's investment portfolio has grown from about $230 million two years ago to over $13 billion by the end of 2025. Its fiscal year 2026 revenue reached $215.9 billion, a 65% increase over the previous year, giving it significant resources to shape the infrastructure market. Global AI-focused data center capacity is expected to nearly triple from 2026 to 2031, and NVIDIA aims to control the connections that enable this growth, not just the computing power.

IT leaders: Compare your current interconnects against your three-year AI plan. If you have, or plan to have, GPU clusters with more than 100 nodes, include photonic readiness in your next infrastructure review.
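The 100-node rule of thumb can be applied mechanically to a capacity plan. This sketch assumes a hypothetical roadmap expressed as a mapping from cluster name to planned GPU node count; the cluster names and sizes are invented for illustration, and the threshold is this article's guideline rather than an industry standard.

```python
from typing import Mapping

# This article's rule of thumb: clusters above 100 nodes warrant a photonic-readiness review.
PHOTONIC_REVIEW_THRESHOLD_NODES = 100


def clusters_needing_photonic_review(
    roadmap: Mapping[str, int],
    threshold: int = PHOTONIC_REVIEW_THRESHOLD_NODES,
) -> list[str]:
    """Return, sorted by name, the planned clusters whose node count exceeds the threshold."""
    return sorted(name for name, nodes in roadmap.items() if nodes > threshold)


# Hypothetical three-year plan: cluster name -> planned GPU node count.
roadmap = {
    "inference-2026": 48,
    "fine-tuning-2027": 128,
    "training-2028": 512,
}
print(clusters_needing_photonic_review(roadmap))
```

Run against this invented roadmap, the function flags the 2027 and 2028 clusters. Keeping the threshold as a named parameter makes it easy to tighten or relax as your own power and cabling constraints become clearer.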

Apple's M5 MacBooks: On-Device AI Moves From Gimmick to Enterprise Reality

Apple's March 3 announcement brought the M5 chip to the MacBook Air. The MacBook Pro received the M5 Pro and M5 Max, both built on Apple's new Fusion Architecture, which combines two dies into a single SoC. The headline number: up to 4x AI performance over the previous generation.

What's Actually New

The M5 Pro and M5 Max include a Neural Accelerator in each GPU core, enabling AI tasks to run alongside graphics processing. Paired with faster memory and Thunderbolt 5, these chips support local model inference, real-time data processing, and other on-device AI work without relying on the cloud.

Apple also updated its Studio Displays. The base model is $1,599; the new Studio Display XDR is $3,299 and uses mini-LED backlighting and a faster refresh rate aimed at professional and creative users.

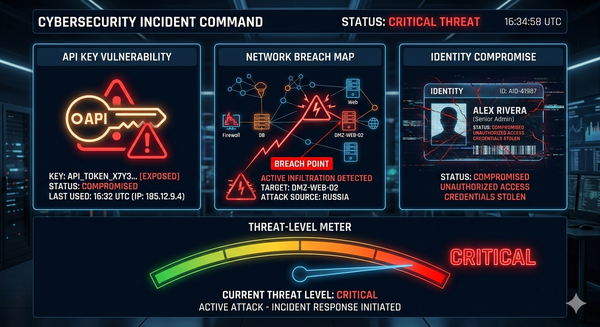

The Security Angle IT Leaders Are Missing

Most reports miss an important point: on-device AI processing has major cybersecurity implications, both positive and negative.

The upside:

- Data sovereignty: Sensitive data processed locally does not have to traverse a network to reach a third-party AI service. For healthcare organizations under HIPAA, financial institutions with SOC 2 obligations, or any enterprise handling PII, local AI inference reduces the attack surface.

- Reduced cloud dependency: On-device processing means fewer API calls to external AI services, reducing exposure to man-in-the-middle attacks, API key compromise, and third-party data handling risks.

- Zero Trust alignment: Local processing fits naturally into Zero Trust architectures, where data minimization and least-privilege access are core principles.

The risk:

- The endpoint becomes the target: As more AI processing occurs on the device, the device becomes more attractive to attackers. Endpoint detection and response (EDR) strategies must recognize that a compromised MacBook with an M5 chip is not just a productivity tool; it is a local AI node with access to sensitive model weights and data.

- Shadow AI: Capable on-device hardware lets employees run unauthorized models and workflows without IT oversight. Without clear policies, organizations face greater data-handling and compliance risk.

Update endpoint security policies for on-device AI. Treat devices running local inference as higher-risk, ensure MDM can inventory and control models, and include AI threat vectors in incident response.
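One way to give MDM or EDR tooling something to act on is a periodic scan that inventories model files on an endpoint and checks them against an approved list. This is a minimal sketch, not a feature of any particular MDM product: the extension list (`.gguf`, `.safetensors`, `.onnx`, `.pt`, `.mlmodel`) is an assumption about common local-model formats, and the allowlist of SHA-256 hashes is a hypothetical input your team would maintain. Directory-bundle formats such as Core ML `.mlpackage` are not handled here.

```python
import hashlib
from pathlib import Path

# Assumed list of common local-model file formats; extend it for your environment.
MODEL_EXTENSIONS = {".gguf", ".safetensors", ".onnx", ".pt", ".mlmodel"}


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in 1 MiB chunks so multi-gigabyte weights never load fully into memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def inventory_models(root: Path, approved_hashes: set[str]) -> list[dict]:
    """Find model files under root and flag any whose hash is not on the allowlist."""
    findings = []
    for path in sorted(root.rglob("*")):
        if path.suffix.lower() not in MODEL_EXTENSIONS:
            continue
        try:
            if not path.is_file():
                continue  # skip directories that happen to share a model extension
            file_hash = sha256_of(path)
        except OSError:
            file_hash = None  # unreadable: still record it so it cannot hide from the inventory
        findings.append({
            "path": str(path),
            "sha256": file_hash,
            "approved": file_hash is not None and file_hash in approved_hashes,
        })
    return findings
```

For example, `inventory_models(Path.home() / "Downloads", approved_hashes=set())` reports every matching file under that folder as unapproved, a reasonable first pass for finding unsanctioned models. Matching on content hashes rather than file names means a renamed copy of an approved model still passes, while a modified one does not.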

The Convergence: Infrastructure and Endpoints Are Telling the Same Story

Read these two announcements together, and a clear pattern emerges: the AI technology stack is bifurcating. Heavy training and large-scale inference will continue migrating toward photonics-connected hyperscale clusters. Meanwhile, edge inference workloads such as summarization, classification, and real-time analysis are moving aggressively onto the endpoint.

For enterprise IT, this means managing both ends of the spectrum simultaneously. Your data center and endpoint strategies are no longer independent line items; they are two halves of the same AI architecture.
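The bifurcation can be written down as an explicit placement policy, which makes gaps visible: any workload type that fits neither half gets flagged for review instead of being placed by default. The category names come from this article; treating them as exact string labels, and raising an error for anything unlisted, are assumptions of this hypothetical sketch.

```python
from enum import Enum


class Placement(Enum):
    """The two halves of the AI architecture."""
    DATA_CENTER = "photonics-connected cluster"
    ENDPOINT = "on-device inference"


# Workload categories named in this article; the exact labels are assumptions.
WORKLOAD_PLACEMENT = {
    "training": Placement.DATA_CENTER,
    "large-scale inference": Placement.DATA_CENTER,
    "summarization": Placement.ENDPOINT,
    "classification": Placement.ENDPOINT,
    "real-time analysis": Placement.ENDPOINT,
}


def place_workload(kind: str) -> Placement:
    """Map a workload type to a placement, refusing to guess for unknown types."""
    key = kind.strip().lower()
    if key not in WORKLOAD_PLACEMENT:
        raise ValueError(f"unclassified workload type {kind!r}: review before deploying")
    return WORKLOAD_PLACEMENT[key]


for workload in ("Training", "summarization", "real-time analysis"):
    print(f"{workload} -> {place_workload(workload).value}")
```

Keeping the mapping as data rather than branching logic lets architecture and security teams review and version the policy together, which is the practical meaning of treating data center and endpoint as one architecture.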

Common Mistakes to Avoid

- Mistake: Treating these as consumer announcements. Both NVIDIA's investment and Apple's M5 launch have direct implications for enterprise infrastructure. Ignoring them because they arrived through product-focused press cycles is a planning failure.

- Mistake: Delaying interconnect upgrades. Organizations that wait for photonic interconnects to become standard will pay higher prices and face limited supply. Evaluating early gives you an advantage.

- Mistake: Ignoring the security gap at the endpoint. Most enterprise security programs were designed around the assumption that AI processing happens in the cloud. The M5 generation breaks that assumption.

- Mistake: Not updating your procurement criteria. If your hardware refresh process does not account for endpoint AI inference and data center photonic readiness, your procurement is already behind.

To turn these insights into action, IT leaders should prioritize the following steps this quarter:

- Launch an AI infrastructure readiness assessment now to identify gaps in both data center interconnects and endpoint AI capabilities.

- Assess your endpoint security posture, treating on-device AI as a distinct threat category, and update policies based on the findings.

- Schedule a briefing with your leadership team this quarter to discuss the photonics transition timeline and its impact on capital planning.

- Audit and update your acceptable-use policies to address how employees deploy on-device AI models and handle the data those models process.

- Contact your data center and colocation partners this quarter to learn their timelines and plans for photonic interconnect readiness.

Moving Forward

The organizations that succeed in the AI era will not be those with the largest GPU budgets. Instead, they will be the ones who recognized the infrastructure shift early, from data center photonics to neural accelerators on the desktop, and adjusted their security, procurement, and architecture strategies to match.

Sources

- NVIDIA Invests $4B In Two Silicon Photonics Companies — HPCwire

- Nvidia to invest $4 billion into photonics companies Coherent and Lumentum — CNBC

- Nvidia to Invest $4 Billion in Data Center Optics Companies — Bloomberg

- Apple introduces the new MacBook Air with M5 — Apple Newsroom

- Apple introduces MacBook Pro with all-new M5 Pro and M5 Max — Apple Newsroom

- Apple debuts M5 Pro and M5 Max — Apple Newsroom

- Apple raises MacBook prices across the board as M5 chips signal AI-first strategy — CNBC

- AI Takes Center Stage: Apple Launches M5 MacBook Air, Pro And New XDR Display — Benzinga

- MacBook Air Gets M5, MacBook Pro Gains M5 Pro and M5 Max — TidBITS